Option to attach elastic inference to an EC2 instance · Issue #8157 · hashicorp/terraform-provider-aws · GitHub

![[NEW LAUNCH!] Introducing Amazon Elastic Inference: Reduce Deep Learning Inference Cost up to 75% (AIM366) - AWS re:Invent 2018 | PPT](https://image.slidesharecdn.com/new-launch-introducing-amaz-dc7595e2-98da-40f8-aaa2-895420541d29-457215190-181202043444/85/new-launch-introducing-amazon-elastic-inference-reduce-deep-learning-inference-cost-up-to-75-aim366-aws-reinvent-2018-14-320.jpg?cb=1667365044)

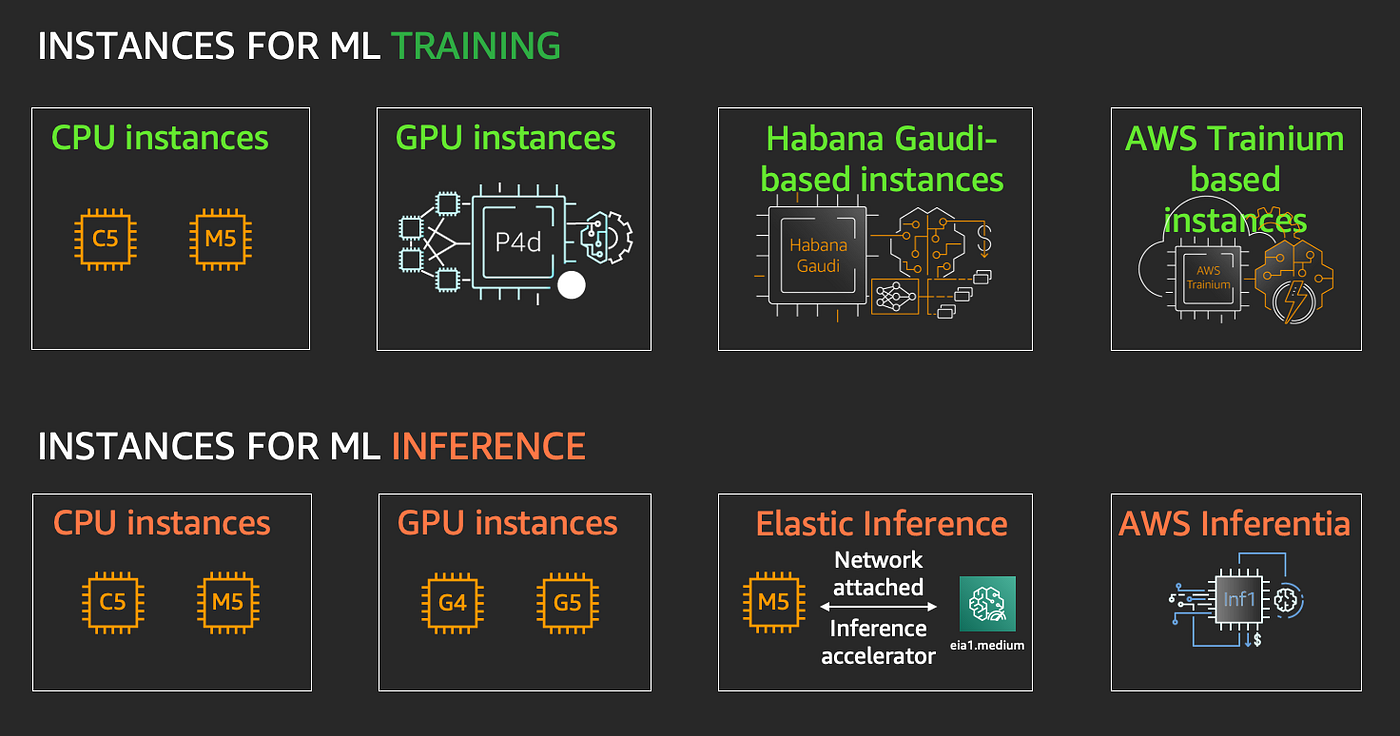

[NEW LAUNCH!] Introducing Amazon Elastic Inference: Reduce Deep Learning Inference Cost up to 75% (AIM366) - AWS re:Invent 2018 | PPT

[ECS] Attach Elastic Inference Accelerator directly to ECS tasks · Issue #484 · aws/containers-roadmap · GitHub

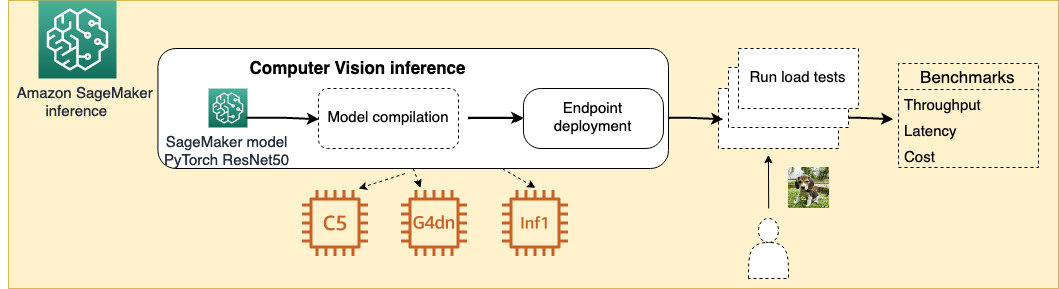

Choose the best AI accelerator and model compilation for computer vision inference with Amazon SageMaker | AWS Machine Learning Blog

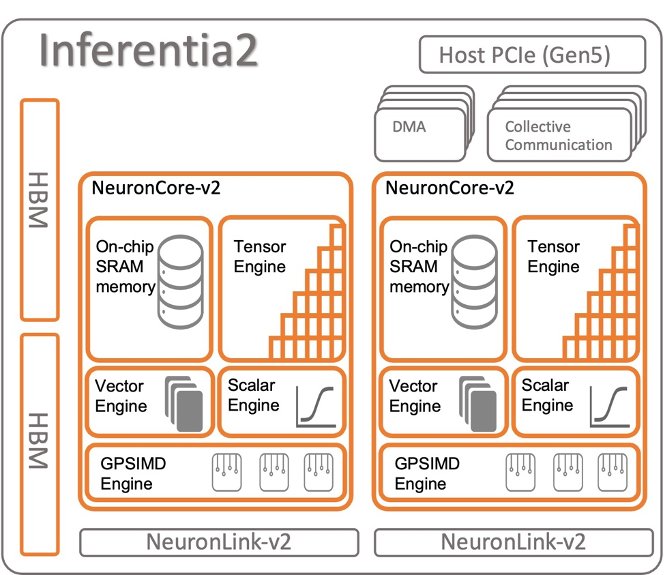

Achieve high performance with lowest cost for generative AI inference using AWS Inferentia2 and AWS Trainium on Amazon SageMaker | Data Integration

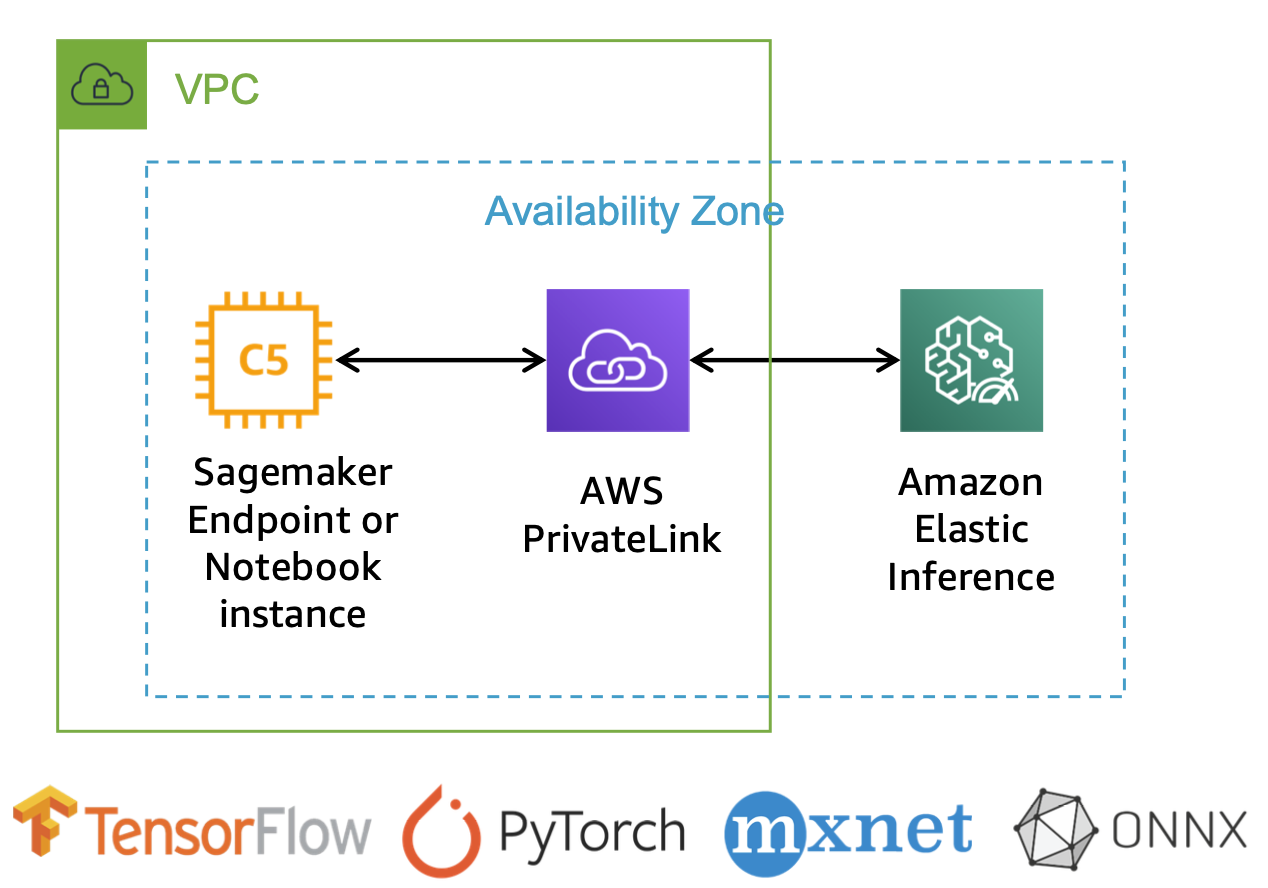

Optimizing TensorFlow model serving with Kubernetes and Amazon Elastic Inference | AWS Machine Learning Blog

Unable to Create AWS Segamaker, Error: The account-level service limit 'Number of elastic inference accelerators across all notebook instances.' - Stack Overflow

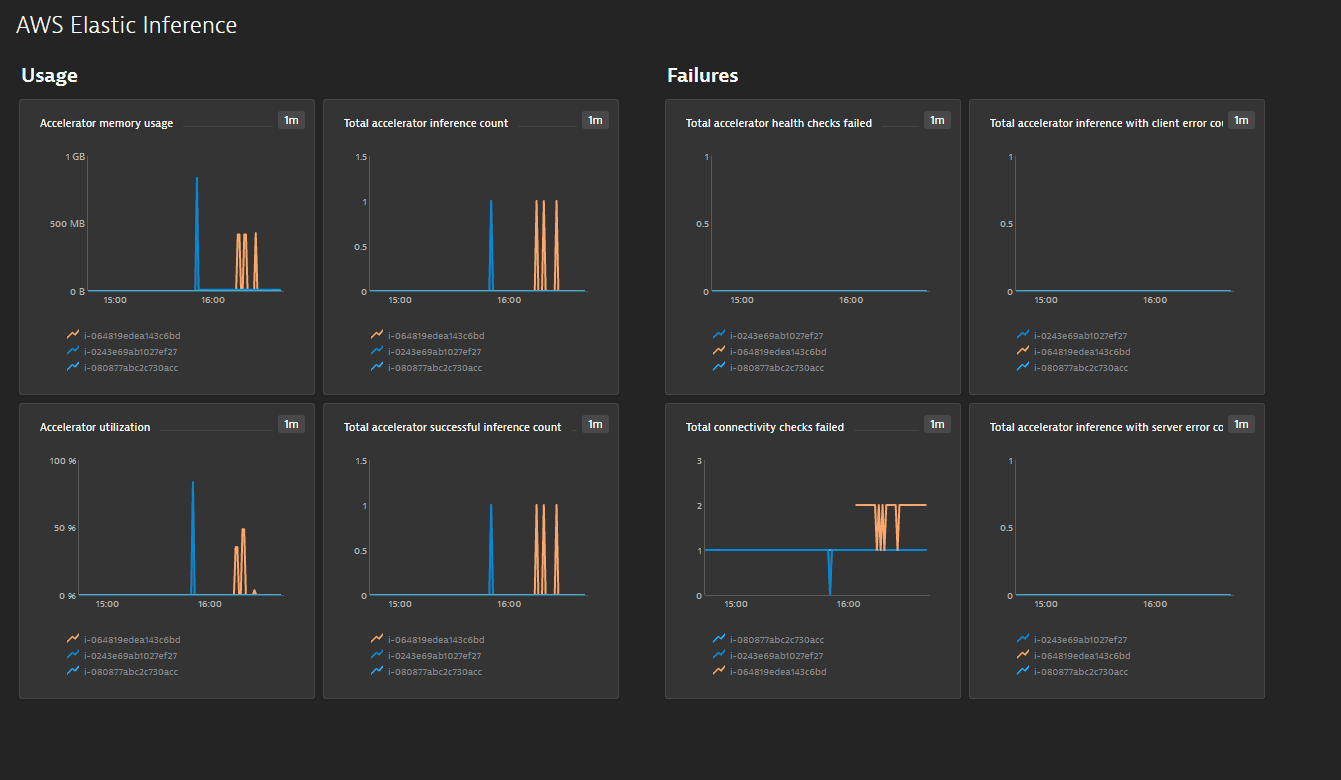

A complete guide to AI accelerators for deep learning inference — GPUs, AWS Inferentia and Amazon Elastic Inference | by Shashank Prasanna | Towards Data Science

![[NEW LAUNCH!] Introducing Amazon Elastic Inference: Reduce Deep Learning Inference Cost up to 75% (AIM366) - AWS re:Invent 2018 | PPT](https://image.slidesharecdn.com/new-launch-introducing-amaz-dc7595e2-98da-40f8-aaa2-895420541d29-457215190-181202043444/85/new-launch-introducing-amazon-elastic-inference-reduce-deep-learning-inference-cost-up-to-75-aim366-aws-reinvent-2018-16-320.jpg?cb=1667365044)

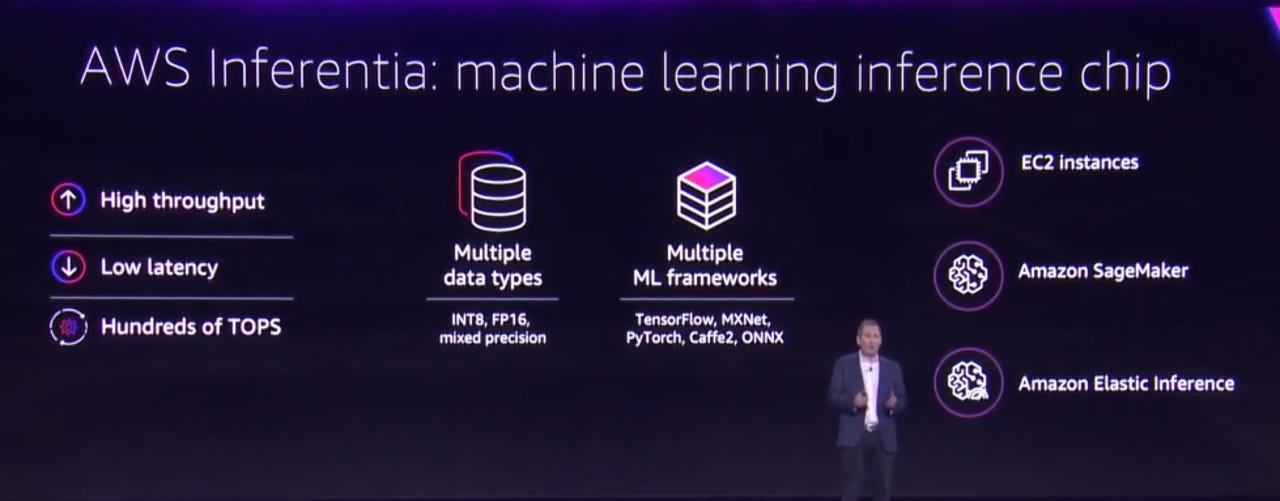

[NEW LAUNCH!] Introducing Amazon Elastic Inference: Reduce Deep Learning Inference Cost up to 75% (AIM366) - AWS re:Invent 2018 | PPT

GitHub - aws-samples/aws-elastic-inference-tensorflow-examples: AWS Tensorflow Elastic Inference cost analysis blog post code. Notebook measures the timing of running object detection on a video locally v. Elastic Inference.

![AWS re:Invent 2018: [NEW LAUNCH!] Amazon Elastic Inference: Reduce Learning Inference Cost (AIM366) - YouTube](https://i.ytimg.com/vi/hqYjkT0BP1o/sddefault.jpg)

AWS re:Invent 2018: [NEW LAUNCH!] Amazon Elastic Inference: Reduce Learning Inference Cost (AIM366) - YouTube